“‘…If you were entering any field now and you had your choice, what field would you go in?’ [Bill] and I both would go into the intersection of biology and computer science. When it comes to that field, we are only at the beginning.” - Melinda Gates

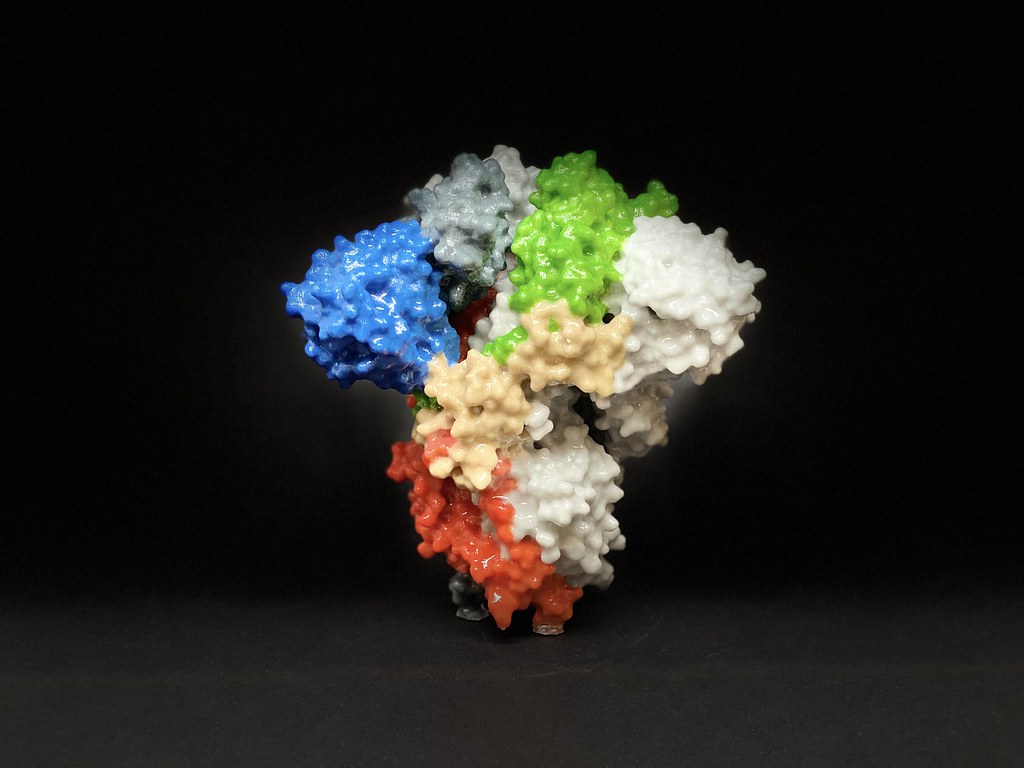

Dr. Jamie Schiffer, who teaches the course Introduction to Bioinformatics, began class with this quote from Melinda Gates. As Gates suggested and Dr. Schiffer enthusiastically said in class, this season of quarantine is a prime time for bioinformatics. Recently, we saw a landmark achievement by Google’s DeepMind artificial intelligence (AI), which received a median score of 92.4 on the Global Distance Test (GDT) in the Critical Assessment of Techniques for Protein Structure Prediction (CASP). The GDT measures, on a scale from 0 to 100, the similarity of two structures of a protein with the same amino acid sequence, usually one derived from a structure prediction and the other from an experimental method. CASP, the “competition” for protein structure prediction software, is held every two years: organizers release protein sequences whose structures are not yet public and compare the submitted predictions with experimental data that stay hidden from public view until after the competition.
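To make the GDT score more concrete, here is a minimal Python sketch of how a GDT-style total score can be computed. It assumes the two structures are already superimposed and are given as lists of alpha-carbon coordinates in ångströms; the real GDT also searches for the best superposition at each cutoff, which this sketch skips. The function name `gdt_ts` and the toy coordinates are made up for illustration.

```python
import math

def gdt_ts(predicted, reference, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """Simplified GDT total score (0 to 100) for two pre-superimposed structures.

    Each structure is a list of (x, y, z) alpha-carbon coordinates in angstroms,
    one per residue, in the same residue order.
    """
    if len(predicted) != len(reference) or not reference:
        raise ValueError("structures must be non-empty and the same length")
    # Distance between each predicted residue and its experimental counterpart.
    distances = [math.dist(p, r) for p, r in zip(predicted, reference)]
    # Fraction of residues that fall within each distance cutoff.
    fractions = [sum(d <= c for d in distances) / len(distances) for c in cutoffs]
    # The score is the average of those fractions, expressed as a percentage.
    return 100.0 * sum(fractions) / len(cutoffs)

# Toy example: four residues, predicted 0.5, 1.5, 3.0 and 10.0 angstroms off.
reference = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (7.6, 0.0, 0.0), (11.4, 0.0, 0.0)]
predicted = [(0.0, 0.5, 0.0), (3.8, 1.5, 0.0), (7.6, 3.0, 0.0), (11.4, 10.0, 0.0)]
print(gdt_ts(predicted, reference))  # 56.25
```

In this toy case one residue is within 1 Å, two within 2 Å, and three within both 4 Å and 8 Å, so the score is the average of 25, 50, 75, and 75 percent. A perfect prediction would score 100.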

In simpler terms, AlphaFold, the algorithm developed by Google’s DeepMind, was able to accurately predict how a protein folds. A protein’s structure is dictated by its sequence of amino acids, as its shape is determined by the complex interactions between them. For a better understanding, a prediction with a score of around 90 is considered comparable in accuracy to a structure determined experimentally by X-ray crystallography or cryo-electron microscopy, the methods that provide the reference used to score the computer-generated models. Scientists are now closer to predicting the shape of a protein and understanding the interactions between its amino acids. This major advance in AI brings biologists one step closer to understanding and predicting protein structures, allowing a more accurate assessment of their function and druggability.

Artificial intelligence is becoming an increasingly important resource in our lives, and its capabilities are growing rapidly. AI has also made its way into the medical field. Watson, which could barely summon encyclopedic answers during its developmental stage, is now being used to help physicians diagnose patients accurately from their test results. AI achieves this by learning from data, either by recognizing patterns on its own or by forming patterns with guidance.

Explaining how an AI functions could fill a whole article of its own. However, a glimpse of machine learning can be seen in this software, developed by the Electronics & Biomedical Intelligent Systems (EBItS) Research Group at Universiti Malaysia Perlis. This particular program is used to detect malaria-infected cells. For now, the economical and reliable way to detect malaria infection is to examine a patient’s blood sample under a microscope. This process is arduous and requires a microbiologist to manually search the sample for the malaria parasite using a light microscope. AI speeds up this process by taking in images of colorized blood slides, identifying the different blood cells under the different colorations of the slide image, and using machine learning to determine which cells look “different” from the rest.
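The idea of flagging cells that look “different” from the rest can be illustrated with a very small Python sketch. This is not the EBItS group's method; it is a toy outlier check that assumes each cell has already been reduced to a single number (here a hypothetical stain-intensity value) and marks cells that sit far from the typical value. The function name, the example values, and the 3.5 threshold are all assumptions chosen for illustration.

```python
from statistics import median

def flag_unusual_cells(stain_intensity, threshold=3.5):
    """Return the indices of cells whose intensity is far from the typical value.

    Uses the modified z-score, which is based on the median and the median
    absolute deviation (MAD), so a few unusual cells do not distort what
    counts as "typical".
    """
    typical = median(stain_intensity)
    mad = median(abs(x - typical) for x in stain_intensity)
    if mad == 0:
        # Every cell looks (almost) identical, so nothing stands out.
        return []
    return [
        i
        for i, x in enumerate(stain_intensity)
        if 0.6745 * abs(x - typical) / mad > threshold
    ]

# Hypothetical per-cell stain intensities; the fifth cell is much darker.
cells = [0.20, 0.22, 0.19, 0.21, 0.80, 0.20, 0.23]
print(flag_unusual_cells(cells))  # [4]
```

A real detector would learn from many image features and from labeled examples of infected cells rather than from one number per cell, but the principle of learning what “normal” looks like and flagging departures from it is the same.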

Similar AIs are also used to diagnose COVID-19 pneumonia and interpret diagnostic scans. These algorithms help read the data more accurately and cross-reference it with thousands of patients’ cases to reach an accurate diagnosis. In effect, every doctor using the algorithm and its database gains the “experience” of treating thousands of similar patients in a short amount of time, something that was previously available only through years of medical practice.

As an aspiring pre-med student, like many biology students at UCSD, I naturally felt uneasy about the future of medicine. With AIs increasingly replacing human positions, I couldn’t help but ask myself: “Will human doctors become unessential in the future?” After much thought, however, my answer is no, because the medical field still needs something that AI fundamentally lacks: empathy and creativity.

Empathy is the ability to feel others’ discomfort and pain as one’s own. This ability is not unique to humans, as experiments have shown that some other mammals also share in the pain of others. In humans, empathetic feelings are linked to the anterior insula, the part of the brain also responsible for processing disgust in response to olfactory or visual stimuli, or even to imagined contamination or mutilation.

While the exact reason for this wide variety of functions is debated, one popular theory ties the insula to the “somatic marker” hypothesis, which suggests that the anterior insula uses “gut feelings”, like a sudden eeriness in the dark, to make decisions, for example, to turn on the light. In essence, this part of the brain is wired to make decisions that protect us from perceived danger. This decision process also extends to others: when the brain is stimulated by apparent harm to someone else, the result is the expression of empathy.

Although AI technology has greatly improved in the last decade, empathy remains a process heavily dependent on biology that AI cannot authentically produce. Empathy is especially important in physician-patient relationships, as it is necessary to build good rapport. Physicians are the experts in providing a “cure” for sickness, so the simple act of a physician showing empathy toward a patient has immediate results. In a review of health outcomes and physician-patient communication, three out of four analytic studies found a statistically significant increase in symptom relief when the physician intentionally spent extra time hearing and answering the patients’ concerns. In one case involving headaches, patients were 3.4 times more likely to report that their symptoms had resolved if they felt they had discussed the issue thoroughly with the physician. This valuable relationship, the comfort of telling someone about one’s problems, is something that only a human doctor can provide. Current medical AI works more as a database of recommendations for physicians than as a physician program that can conduct a patient visit.

Despite the advances in AI, programs that mimic human empathy are severely lacking. In some cases, the AI’s simulated empathy leads its users to be unkind to the bot. In one case study, the National Health Service (NHS) of Great Britain implemented a chatbot to make appointments for patients. According to the NHS, the goal was to schedule appointments more quickly depending on the seriousness of the symptoms entered. However, focus group results revealed that patients actually tried to take advantage of the chatbot by exaggerating their symptoms to see a physician sooner. According to reports, the NHS abandoned the pilot program.

Dr. Liraz Margalit, a social psychologist who specializes in behavioral design and decision making, explains that this behavior arises from the “artificial” interaction between the bot and humans. In essence, the interaction between the two is closer to a command than a conversation. Because there is no social obligation and the users know that they do not need to feel empathy toward the bot, they are more inclined to lie and exploit the algorithm than to use it as intended. In such cases, empathy is needed from the patients’ side as well: for an AI to make the most accurate diagnosis, honestly reported symptoms are crucial.

When asked if she believed that AIs will replace human doctors, Dr. Schiffer readily replied with a firm “no.” Her rationale was simple: artificial intelligence was created to help humans make better decisions, not to replace them. She used the example of artificial intelligence in chess to explain. After his defeat by IBM’s chess computer Deep Blue, grandmaster Garry Kasparov used the power of AI to develop an advanced form of chess called “Centaur Chess,” in which a human player collaborates with a chess engine. These players often beat standalone chess engines because the human is trained to work with the chess AI to find the best strategies, sometimes overriding the AI’s recommendations with the player’s own intuition, which gives the pair the winning edge.

Such is the case for medicine as well. Medical AI still lacks some of what it would need to fully replace a physician, such as empathetic responses. However, in areas such as optimizing operating room efficiency or cross-referencing a patient’s symptoms with thousands of past medical records to determine the possibility of infection, AIs can immediately add to the resources available to save human lives. At the end of the day, artificial intelligence was created to help us, not to replace us. Having AIs assist in diagnosing patients and, even more importantly, understanding the development and inner workings of such algorithms would give medical doctors the winning edge in treating difficult symptoms. For pre-meds entering the medical field, embracing the new binary helper without relying on it would definitely up our game in the fight for patients’ health.

On that note, BIMM 143 was a great course that gave me an excellent and solid intro to bioinformatics coding. I recommend it for all students, 10 out of 10 stars.

A special thanks to Dr. Jamie Schiffer for teaching an awesome Winter Quarter of BIMM 143, guiding me through the topic, and checking the accuracy of the article.

Works cited

Cover art

https://search.creativecommons.org/photos/be6a1d1f-2d14-4d0e-ae9b-9bf1875fc9a2

Giant Chess

https://search.creativecommons.org/photos/c6eb6f19-8012-40a6-91cc-aee2f3763b81

Deepmind AI

https://www.nature.com/articles/d41586-020-03348-4

Watson

https://www.ibm.com/watson-health

COVID-19 diagnosis AI

https://www.ncbi.nlm.nih.gov/pmc/articles/PMC7196900/

Malaria

Protein misfolding

https://febs.onlinelibrary.wiley.com/doi/abs/10.1111/j.1742-4658.2006.05181.x

AI Cardiovascular Imaging

https://www.ncbi.nlm.nih.gov/pmc/articles/PMC7350824/

Mice empathy

https://pubmed.ncbi.nlm.nih.gov/16809545/

https://www.ncbi.nlm.nih.gov/pmc/articles/PMC5799121/

Doctor empathy helps improve patients

https://www.ncbi.nlm.nih.gov/pmc/articles/PMC1337906/?page=5

Empathy origin

https://www.ncbi.nlm.nih.gov/pmc/articles/PMC5799121/

https://pubmed.ncbi.nlm.nih.gov/19096369/

Oxytocin healing

https://pubmed.ncbi.nlm.nih.gov/32603799/

https://www.vr-elibrary.de/doi/10.13109/zptm.2005.51.1.57

Chatbox appointment

Chatbox rudeness

https://www.psychologytoday.com/us/blog/behind-online-behavior/201607/the-psychology-chatbots

Antimicrobial Resistance AI

https://bmcmedinformdecismak.biomedcentral.com/articles/10.1186/s12911-017-0550-1